Olyxee is an AI infrastructure company. We build systems that let organizations put AI to work across their operations - reliably, transparently, and at scale.

Mission

Most AI today advises. We believe it should execute.

Our mission is to close the gap between what AI can understand and what it can actually do inside an organization. Teams everywhere are stuck translating AI recommendations into manual work. We're building the layer that removes that gap entirely.

Vision

A world where AI quietly runs the operations that move organizations forward.

We see a future where the work of running a company - reconciling, coordinating, deciding, executing - happens on top of an AI infrastructure that any team can trust, audit, and direct in their own words.

Objectives

What we're working toward.

Make AI execute, not just advise - across real systems and real workflows.

Give every action an audit trail so teams can trust what runs in production.

Hide infrastructure complexity behind clear outcomes and simple controls.

Reach a thousand operating teams running on Olyxee by 2027.

Values

Every output, action, and decision is logged and reviewable. Trust is earned by being checkable.

We measure ourselves by what runs in the background, not by how loudly we announce it.

We earn the right to do more by making the first thing work end-to-end.

Real users, real workflows, real consequences. We design for the people on the hook.

Principles

Every engagement starts with a single workflow. We earn the right to do more by proving the first one works.

Every action an Olyxee agent takes is logged, traceable, and reviewable. Production AI without an audit trail is not production AI.

We measure success by what runs in the background, not by how loud we are. The work speaks for itself.

From the founder

We started Olyxee because the hardest part of AI isn't intelligence. It's getting that intelligence to actually do something useful.

The models are smart enough. What's missing is the infrastructure that lets them operate: connecting to real systems, executing real workflows, and doing both in a way teams can trust.

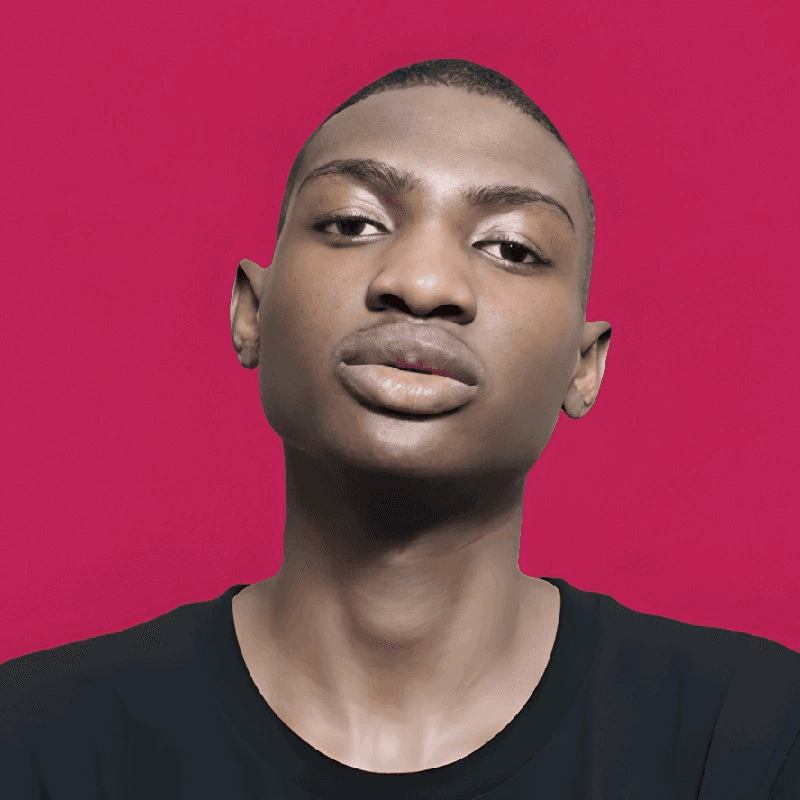

Lethabo Scofield

Founder & CEO

Join us

We're building a team of people who want to make AI work in the real world - not just in demos.